In recent years, many governments have pursued AI sovereignty by focusing on specific layers of the technology stack. But various initiatives have demonstrated the challenges of this strategy: consider Australia’s private-sector push to create a “national” large language model (LLM), Germany’s incentives to expand chip production, and India’s efforts to assemble a national GPU cluster, among others. As our previous research has shown, the resource intensity and rapid pace of AI development mean that only a select few superpowers and middle powers have the scale and breadth of capabilities to sustain a sovereignty strategy over time. Yet even for most of these countries, AI sovereignty conceived as full-stack autarky remains an illusion.

Even if stack-based sovereignty were attainable, it could prove risky: the strategy implicitly assumes that today’s compute-intensive, LLM-based AI paradigm will continue to dominate. Yet future advances in AI architectures and infrastructure could turn stack-based moonshots into massive sunk costs.

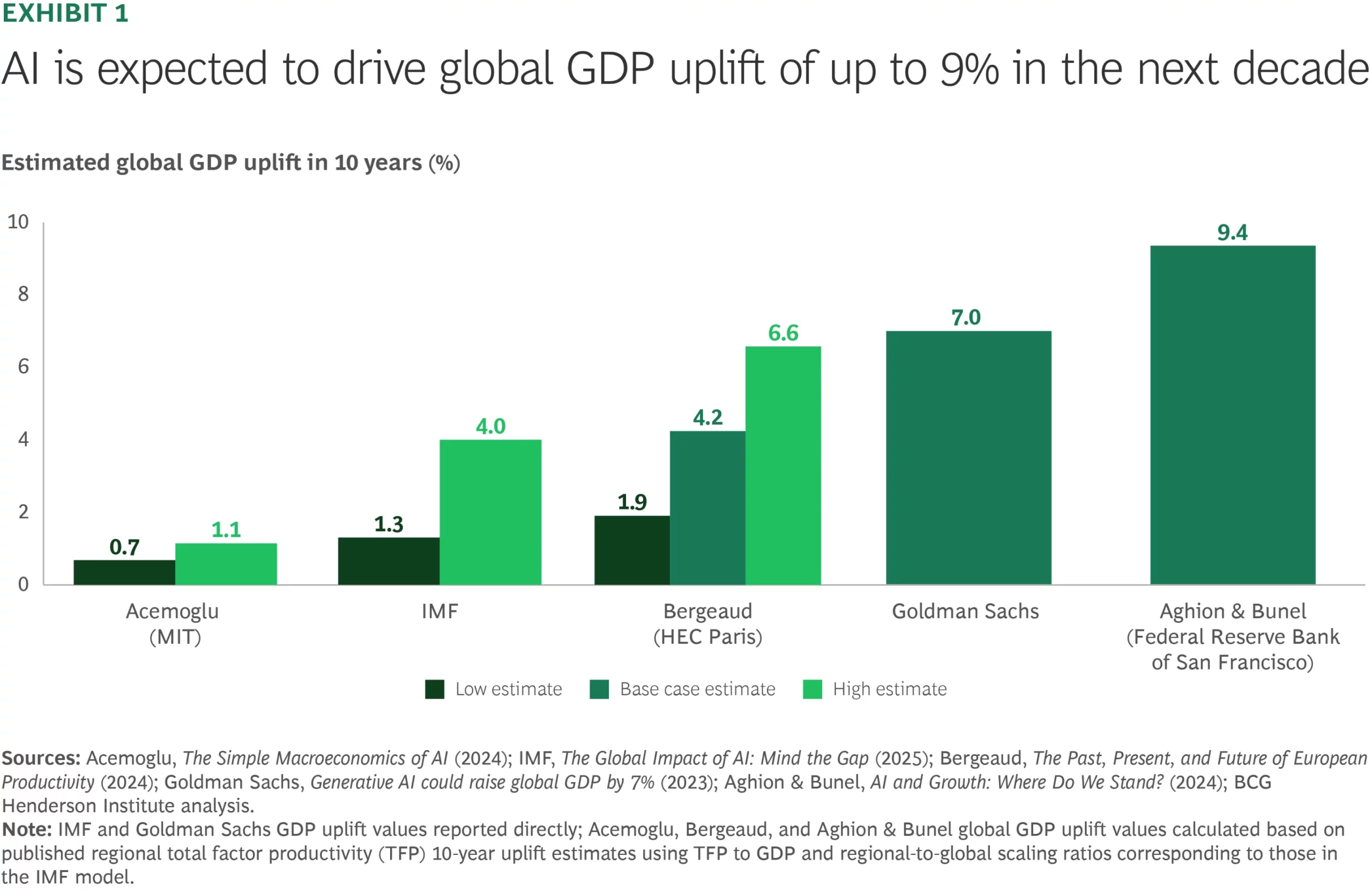

For most countries, a more practical AI sovereignty strategy is built around AI resilience: using, adapting, and governing AI domestically at scale while minimizing strategic dependencies. Although forecasts diverge, even relatively conservative estimates suggest the potential payoff is significant. The International Monetary Fund (IMF), for example, expects effective AI adoption to boost global GDP by 4% over the next decade, or roughly $4.7 trillion (see Exhibit 1). For comparison, that’s nearly the size of Germany’s economy. Countries that lag behind risk not only slower economic growth but also diminished competitiveness across industries poised to be disrupted by AI.